Top AI Companies Agree to Pentagon Deals for Classified Work

The Defense Department finalized agreements with major AI and cloud players to use their systems in classified settings.

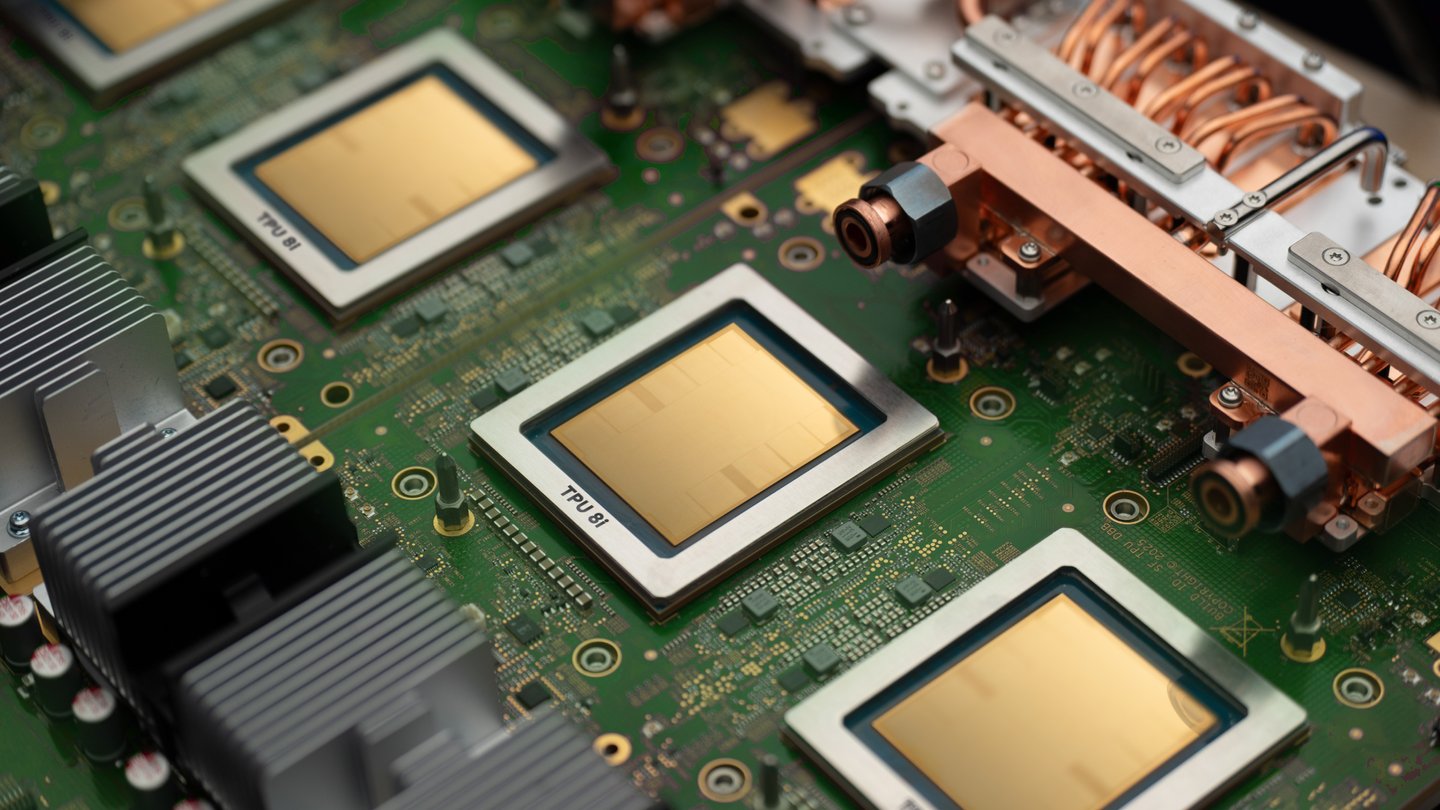

Coverage of Google, Alphabet, DeepMind, the Gemini models, and TPU chips.

54 stories · Updated 2026-05-01

The Wall Street Journal argues that Microsoft, Amazon, Meta, and Alphabet are ramping capital spending for AI fast enough that depreciation is starting to bite, raising pressure to translate AI demand into durable profit.

Ars Technica argues Google’s Gemini rollout across Workspace makes opting out confusing, even as Google stresses limits on how Workspace content is used for model training.

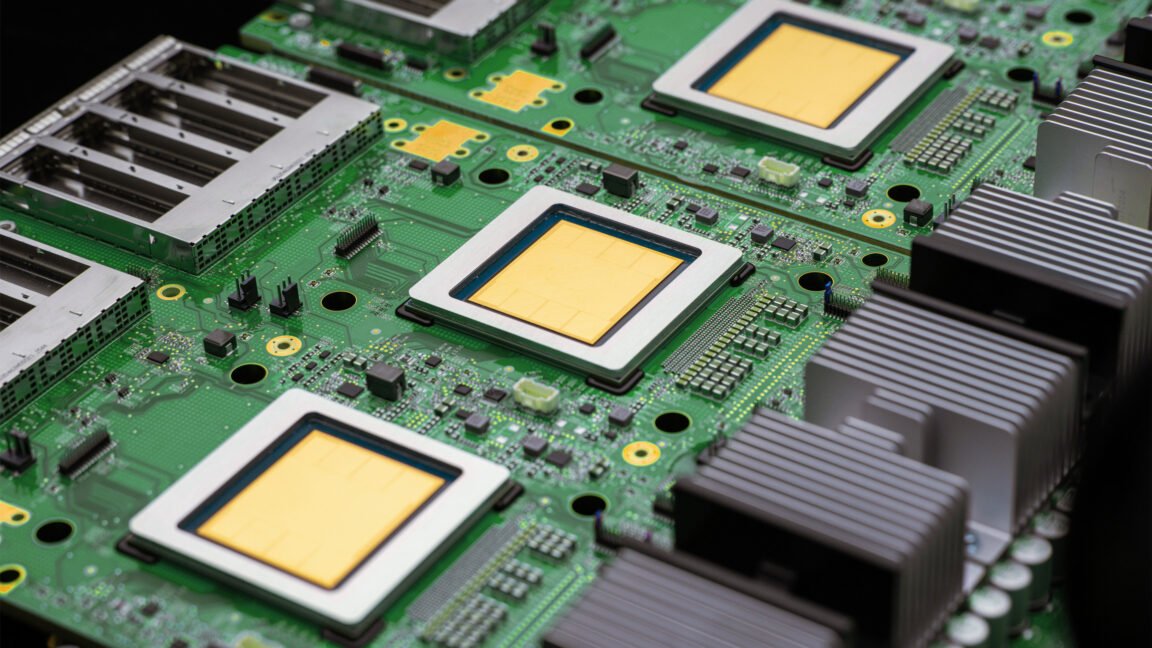

The company says the new TPU designs target faster frontier-model training and improved efficiency across the AI lifecycle.

A report says the agreement expands DoD access to Google’s models, amid employee concerns about military AI uses.

Google’s open-model line gets a major update, with a more permissive license aimed at broader commercial and research use.

A new contract expands Google’s AI availability on classified networks, following Anthropic’s push for tighter guardrails.

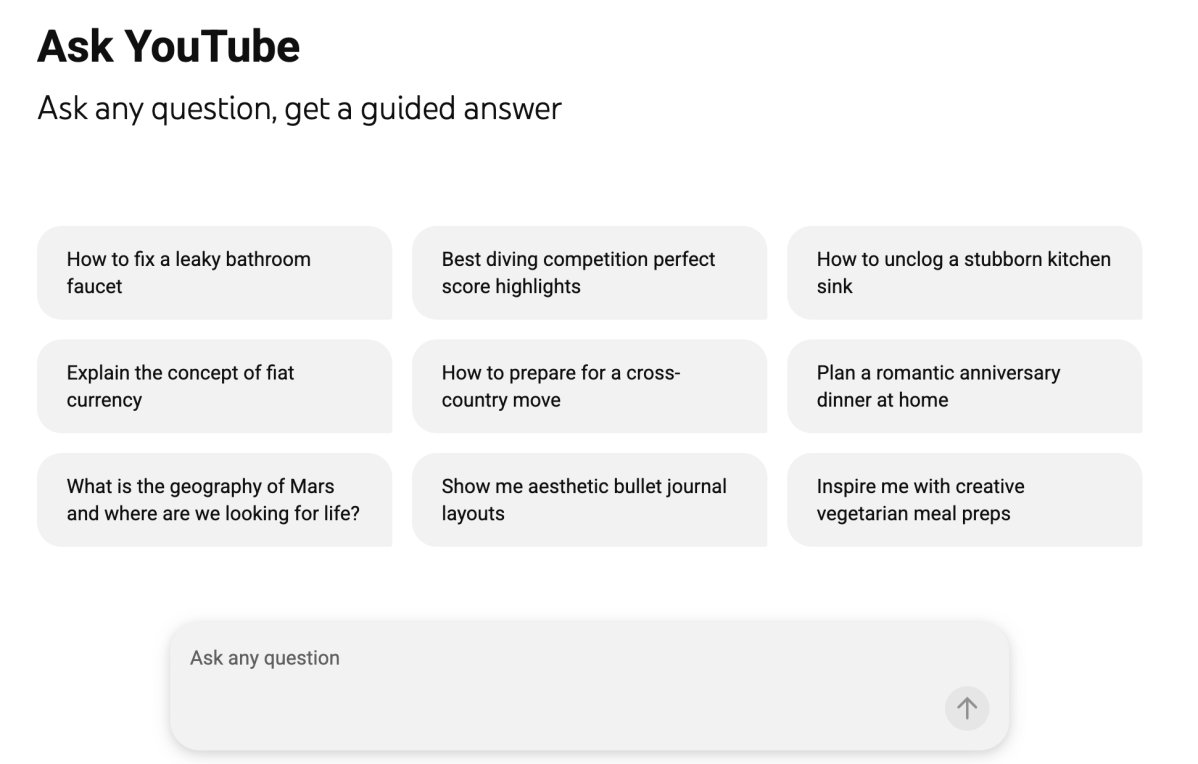

The new YouTube experiment blends video results with AI-generated summaries and follow-up Q&A, initially limited to U.S. Premium users.

Former DeepMind reinforcement learning leader David Silver’s new lab says it wants to build a 'superlearner' that acquires skills without human datasets.

Alphabet will inject $10 billion immediately at a $380 billion valuation, with $30 billion more contingent on Anthropic hitting performance benchmarks.

The Chinese AI lab's V4 Pro model tops 1.6 trillion parameters, claims near-parity with frontier models on reasoning, and costs a fraction of what GPT-5.5 or Claude Opus does.

With four of the largest tech companies planning to spend nearly $700 billion on AI this year, economists are questioning whether the jobs being eliminated will ever return at the same scale.

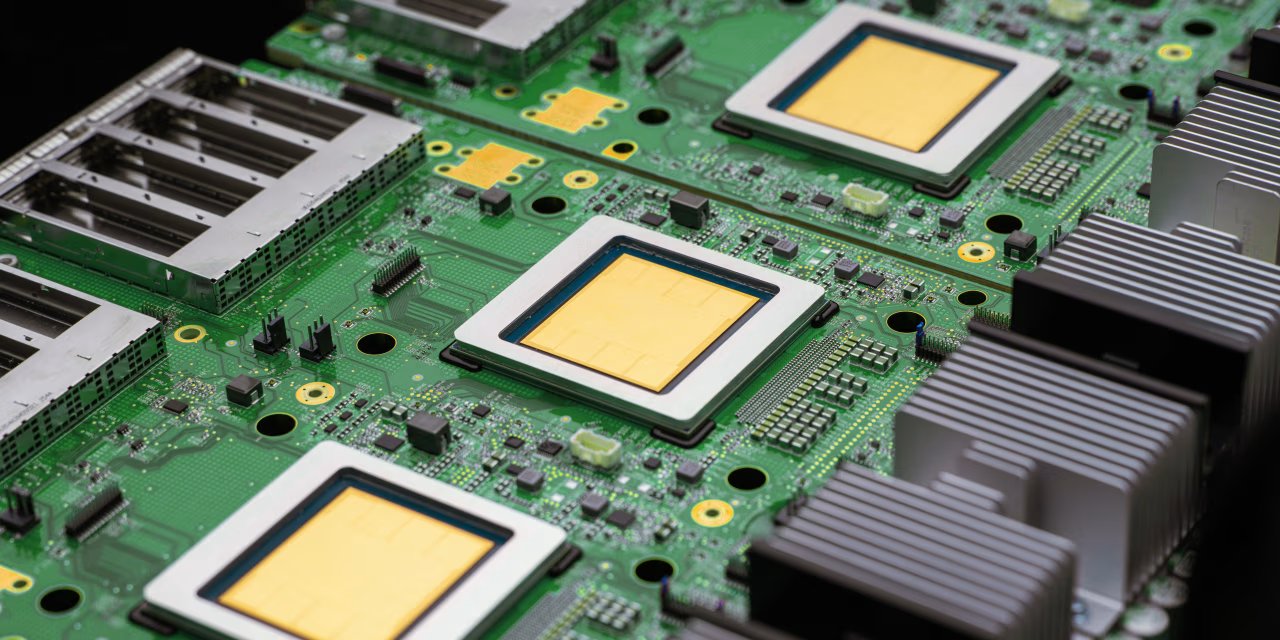

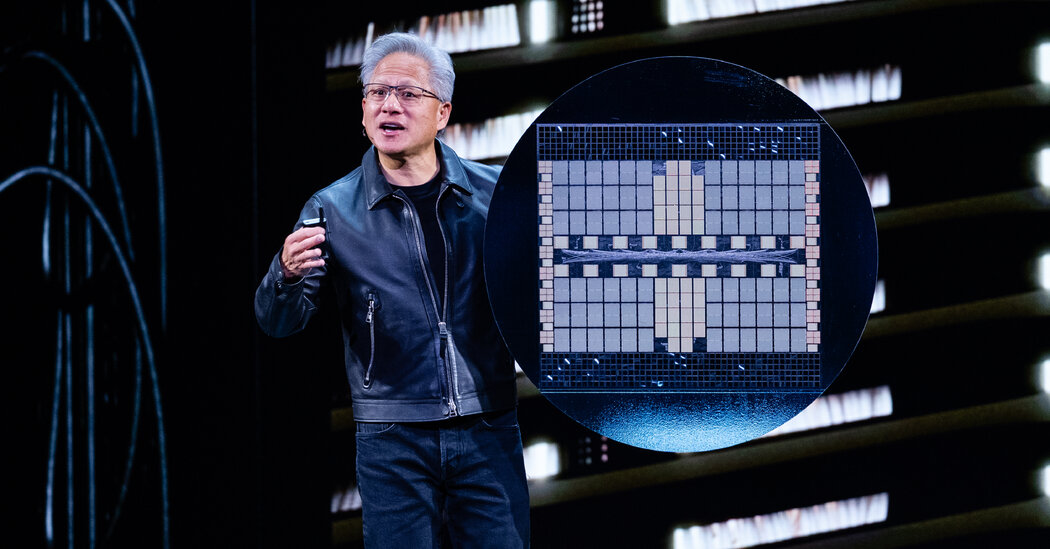

First-quarter revenue of $13.6 billion topped Wall Street estimates by more than $1 billion on a 22% jump in the Data Center and AI segment, and shares rose more than 20%.

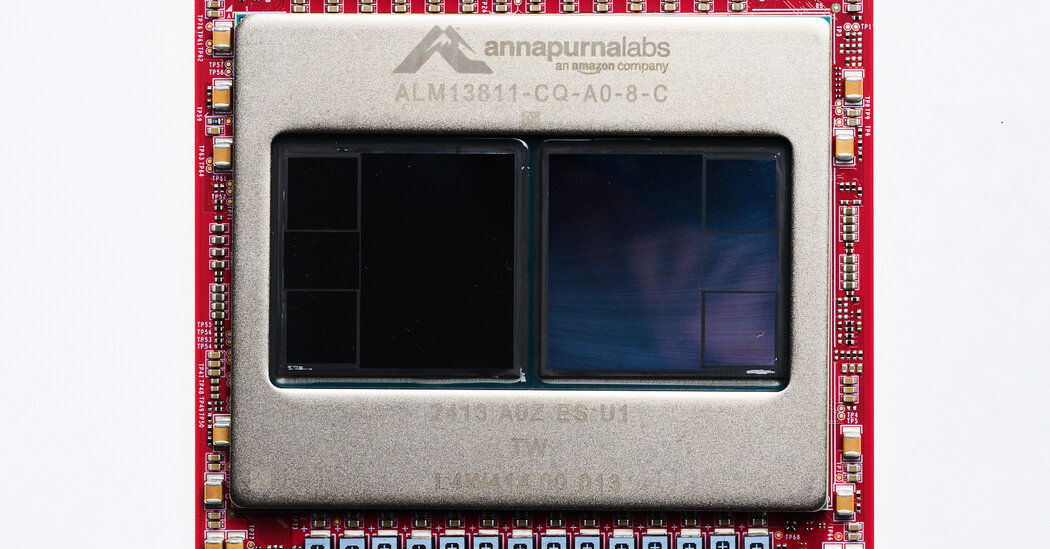

The Wall Street Journal reports Alphabet unveiled a processor aimed at running AI models (inference), highlighting demand growth as companies deploy generative AI systems.

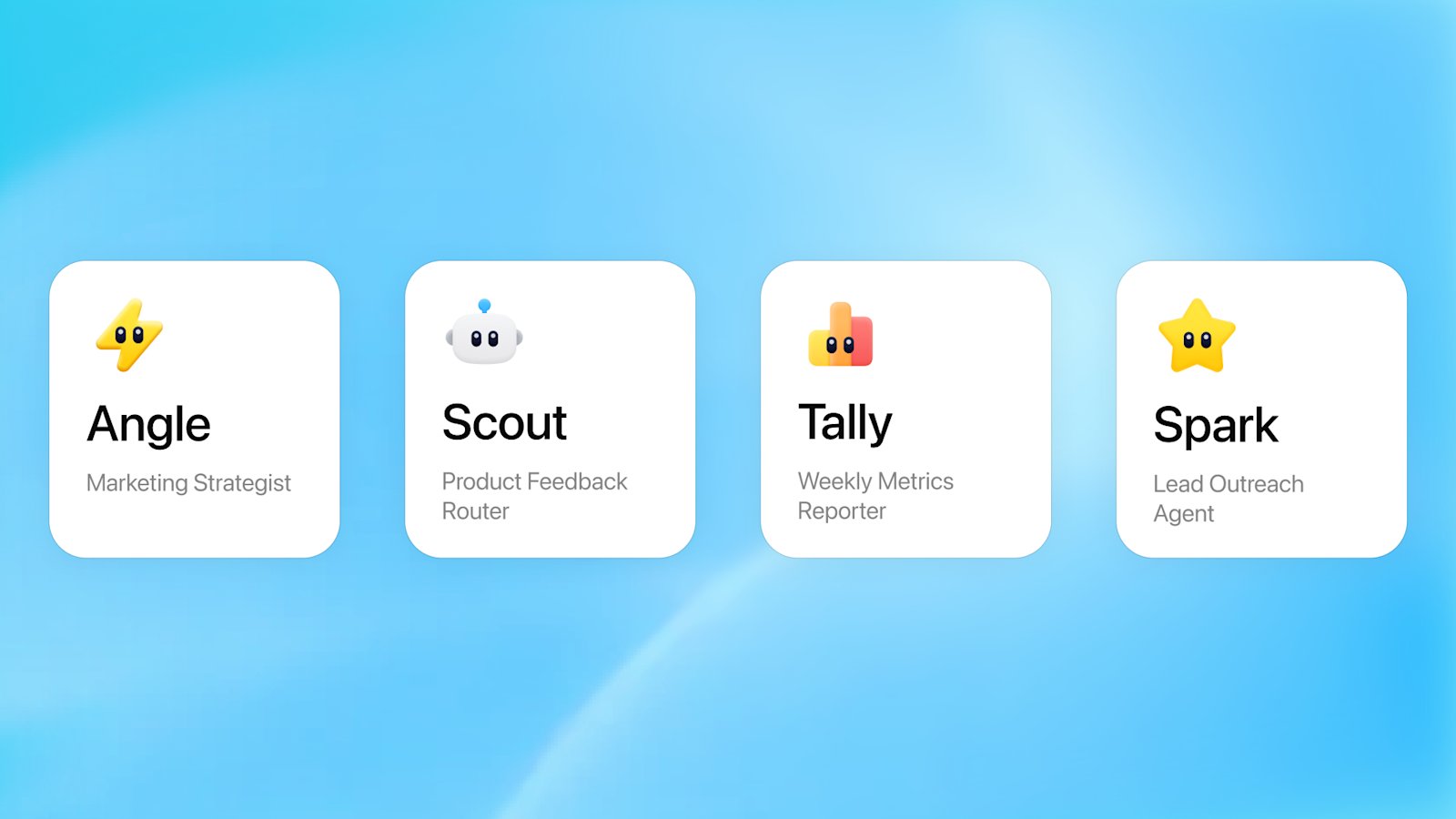

The Codex-powered agents run continuously in the cloud, integrate with Slack and Salesforce, and allow organizations to share and schedule automated workflows across teams.

The TPU 8t delivers 2.8 times the compute of its predecessor for model training, while the TPU 8i offers a 9.8-times improvement per pod for agentic inference — and both will be available from Google Cloud later in 2026.

At its annual Las Vegas conference, Google consolidated its AI products under a unified enterprise agent brand and announced a $750 million fund to help cloud partners sell AI agents to businesses.

The new model generates accurate multilingual text, high-fidelity marketing assets, and multi-panel comics — and is available to all ChatGPT users starting Tuesday.

The sweeping new deal with Amazon, which builds on $8 billion in prior investment, turns Anthropic into one of Amazon's most significant cloud and chip customers alongside its role as a portfolio company.

The deal includes a $5 billion upfront commitment plus $20 billion tied to milestones, and requires Anthropic to spend $100 billion on AWS over the next decade.

The $15 million addition brings in a new cohort of think tanks including American Compass, CSIS, the Urban Institute, and Chile's CENIA to examine AI's effects on labor markets, infrastructure, and democratic institutions.

Federal regulators and senior officials, including Federal Reserve Chair Jerome Powell, privately cautioned bank CEOs about the potential dangers of Anthropic's restricted frontier model, as JPMorgan and Morgan Stanley were permitted to test it for defensive security purposes.

Internal investor documents reveal ChatGPT's early ad pilot crossed $100 million in annualized revenue within six weeks, with projections scaling to $11 billion by 2027 and $53 billion by 2029.

The agreement gives Anthropic a new tranche of Nvidia-powered data center capacity in the US, as demand for Claude continues to surge past a $30 billion annual run rate.

The surge in installs suggests Muse Spark may have revived consumer interest in Meta's AI product, which has lagged well behind ChatGPT, Claude, and Gemini.

The partnership gives Intel a crucial validation of its data center chip roadmap at a moment when the company is fighting to stay relevant against Nvidia and custom silicon.

Wired's analysis finds the model represents a genuine qualitative leap over Llama 4, but Meta's shift away from open-source by default marks a strategic inflection point worth watching.

Built from scratch by the team Zuckerberg assembled after being disappointed by Llama 4, Muse Spark benchmarks favorably against models from OpenAI, Anthropic, and Google in early evaluations.

The experimental app runs speech recognition entirely on-device without a subscription, competing directly with Wispr Flow and other AI transcription tools.

The restricted AI model can autonomously find critical vulnerabilities across major operating systems and browsers — and Anthropic is keeping it locked away from the public for safety reasons.

The three competing AI labs are sharing intelligence to detect adversarial distillation — a practice where foreign actors extract proprietary capabilities from frontier models without authorization.

The Nvidia manufacturing partner's quarterly results signal that hyperscaler appetite for AI compute hardware remains robust despite geopolitical headwinds.

The MAI Superintelligence team's first commercial model releases beat competitors on key benchmarks while undercutting OpenAI and Google on price.

The three tech giants are committing to gigawatts of gas generation capacity to feed insatiable AI energy demand, raising questions about long-term resource and climate exposure.

The acquisition accelerates Anthropic's push into life sciences, adding a ten-person team of computational drug discovery veterans to its health division.

Google's monthly roundup confirms a Gemini platform that is becoming more deeply personalized, more globally accessible, and more competitive with real-time audio rivals like GPT-4o voice.

Oracle employees across the US, India, Canada, and Mexico received termination emails on March 31, as the company redirects billions toward AI cloud infrastructure at the expense of headcount.

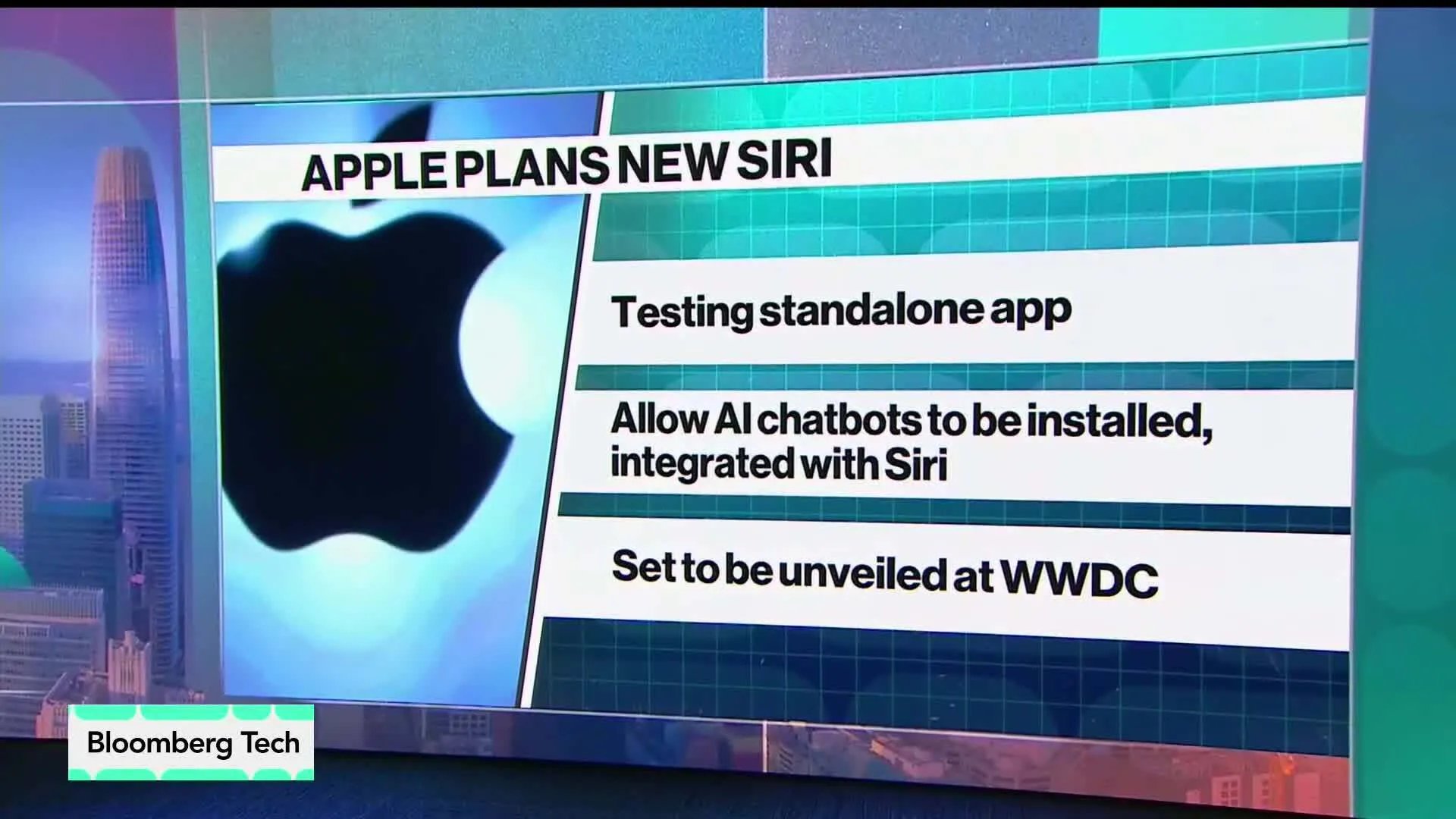

Mark Gurman's Power On newsletter reveals Apple is reframing its AI strategy around platform ownership rather than model development, while paying rare bonuses to retain iPhone designers.

Google's monthly Gemini update lets users import chat history from rival AI apps and introduces a new audio model fast enough to sustain real-time conversation in over 200 countries.

The iPhone maker will let ChatGPT, Gemini, and other third-party models plug directly into Siri, reframing Apple as an AI platform rather than a single-model gatekeeper.

After Instant Checkout stumbled due to stale product data and limited merchant adoption, OpenAI is shifting to a model where purchases redirect to retailers' own websites via in-app browsers inside ChatGPT.

From Anthropic's Pentagon battle to the data center construction boom and hardware price inflation, a roundup of the defining AI narratives through mid-March finds the industry at an inflection point.

The social media giant's accelerated silicon roadmap targets both ranking and generative AI inference, with all four chip generations set to deploy within two years, far faster than industry norms.

Silicon Valley is watching closely as the first-ever supply-chain risk designation against a US domestic company tests whether the executive branch can penalize firms for their AI safety positions.

The deal brings automated red-teaming and vulnerability detection into OpenAI's Frontier enterprise platform, used by more than a quarter of Fortune 500 companies.

The Claude maker filed two federal complaints challenging the DOD's unprecedented blacklisting of an American AI company, citing First Amendment violations.

The Turing Award winner's new startup, backed by Nvidia, Samsung, and Bezos Expeditions, is betting that world models will surpass large language models for real-world applications.

The job reductions will affect divisions across the company and may begin this month, with some cuts targeting roles Oracle expects AI to make redundant.

The update to ChatGPT's default model targets tone and conversational flow, reducing unsolicited emotional reassurances that frustrated users for months.

The fastest and cheapest model in Google's Gemini 3 series launches in preview at $0.25 per million input tokens, targeting cost-sensitive large-scale deployments.

A comprehensive analysis of AI infrastructure commitments finds hyperscalers planning nearly $700 billion in 2026 data center spending, with Stargate construction underway in Texas and Oracle locking in $300 billion in compute contracts.

Google's monthly Gemini Drop update confirms the general consumer rollout of Gemini 3.1 Pro alongside a new AI music creation tool and an upgraded fast image model for Gemini app subscribers.

Nvidia's fiscal fourth quarter was its most profitable ever, surpassing the quarterly earnings of Apple, Microsoft, and Alphabet, with data center revenue surging 75% year-over-year to $62.3 billion.

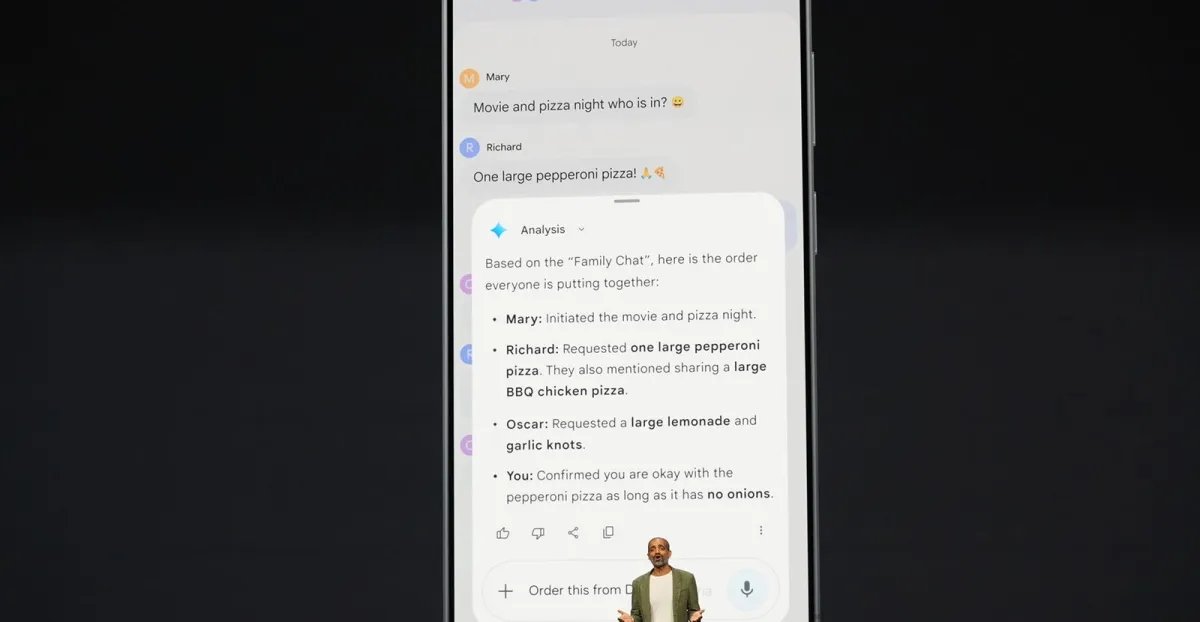

At Samsung's Galaxy S26 Unpacked event, Google demonstrated Gemini's ability to autonomously complete multi-step tasks — like analyzing group chats and ordering food through apps — a capability Apple promised for Siri in 2024 but has yet to ship.