Meta Unveils Four Generations of Custom MTIA AI Chips in Bid to Reduce Reliance on Nvidia

The social media giant's accelerated silicon roadmap targets both ranking and generative AI inference, with all four chip generations slated to deploy within two years, a pace far faster than industry norms.

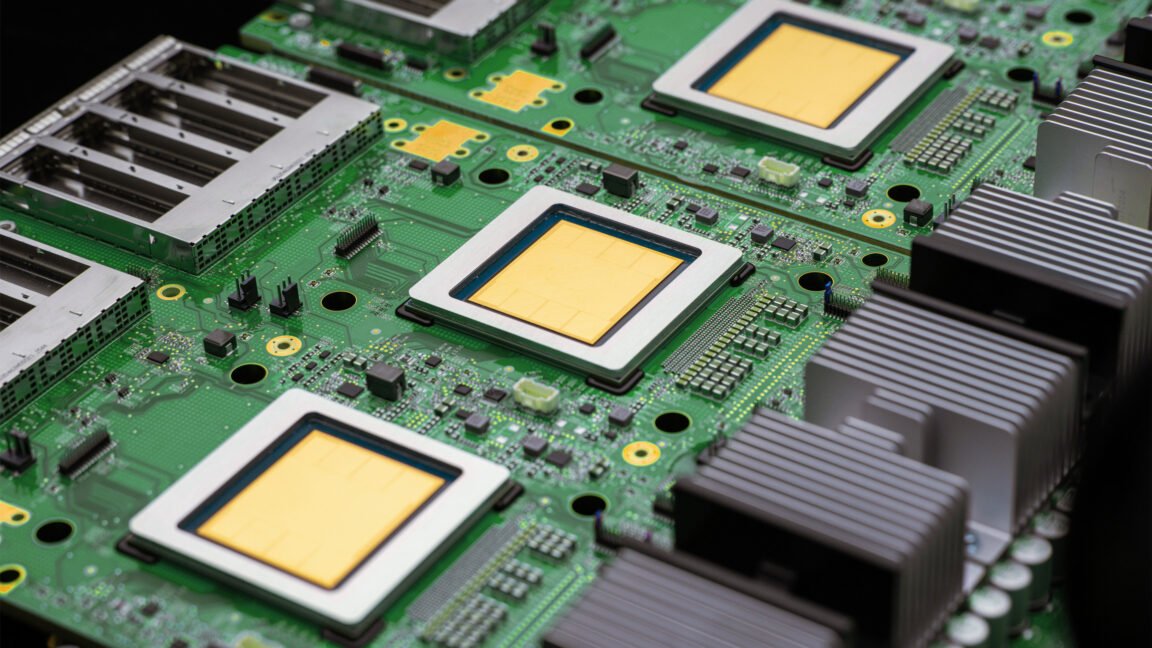

Meta announced on Tuesday that it is developing and deploying four new generations of its custom Meta Training and Inference Accelerator (MTIA) chips within the next two years, a pace the company described as significantly faster than typical chip cycles. The roadmap covers the MTIA 300, 400, 450, and 500, each designed to improve compute, memory bandwidth, and efficiency across Meta's AI infrastructure.

The MTIA 300 is already in production and focused on ranking and recommendations training — the systems that determine what content users see across Facebook, Instagram, and Threads. The MTIA 400, 450, and 500 are designed to handle all workload types but will be deployed primarily for generative AI inference, with mass production expected through 2027.

Meta said the MTIA 400 is its first chip that offers raw performance competitive with leading commercial products from Nvidia and AMD, though the company declined to specify which benchmarks formed the basis of that comparison.

A key design philosophy distinguishing Meta's approach from mainstream chip development is its inference-first focus. While most GPU roadmaps are optimized for training large models, the MTIA 450 and 500 were designed specifically for the demands of serving generative AI to billions of users at scale — a workload that differs substantially from training in its memory access patterns and latency requirements.

The chips share a common foundational infrastructure, allowing Meta to swap in newer generations without overhauling its server architecture.

Meta deploys hundreds of thousands of MTIA chips for inference across its applications, making the chip program one of the largest internal silicon deployments in the industry. The company has entered multi-year, multi-generational supply agreements with both Nvidia and AMD in parallel, indicating that custom silicon is intended to supplement rather than fully replace third-party GPUs in the near term.

The announcement positions Meta alongside Google, Amazon, and Microsoft as hyperscalers pursuing vertical integration in AI hardware. Custom chips allow these companies to reduce their 'compute tax' — the premium paid to Nvidia and AMD — while tailoring hardware more precisely to their specific AI workloads, which differ in important ways from general-purpose training demands.

Read the original reporting at Meta Newsroom.