Google's Eighth-Generation TPUs Split Training and Inference Into Two Purpose-Built Chips

The TPU 8t delivers 2.8 times the per-pod compute of its predecessor for model training, while the TPU 8i offers 9.8 times the per-pod compute for agentic inference. Both will be available from Google Cloud later in 2026.

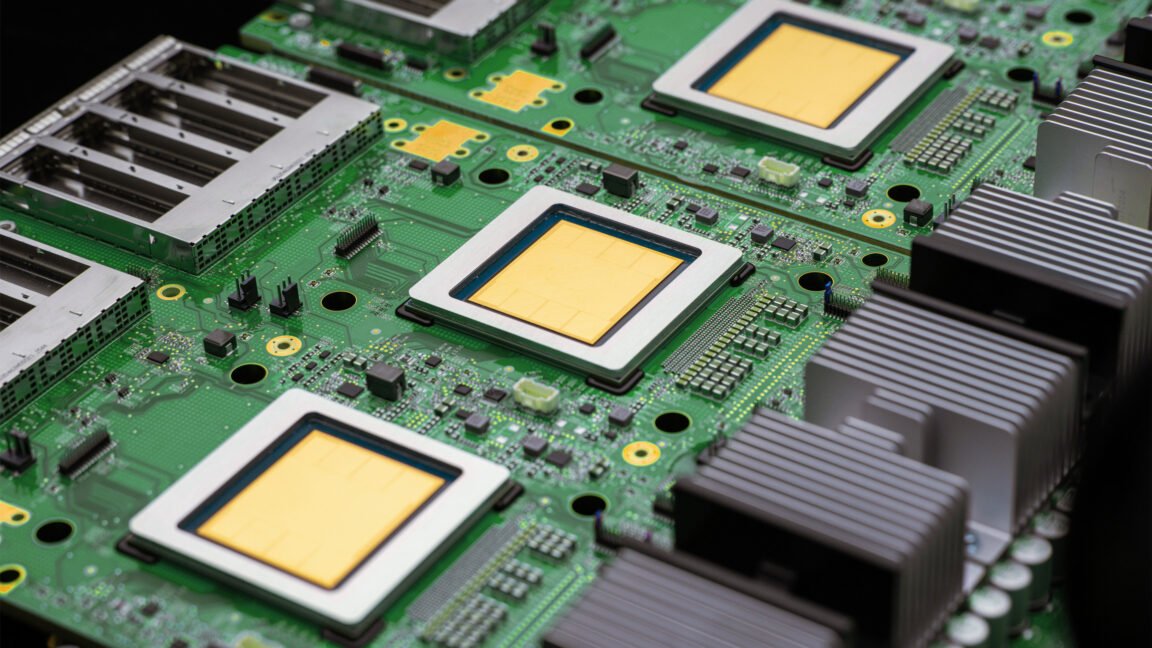

Google unveiled its eighth-generation Tensor Processing Units at a private preview event during Google Cloud Next 2026 in Las Vegas on April 22. It introduced two distinct chip designs, which the company says mark the first time it has built dedicated silicon for training and, separately, for agentic inference. The split reflects how differently the two workloads stress AI hardware and signals Google's intent to sharpen its cost advantage over vendors that rely on Nvidia GPUs for both tasks.

TPU 8t, optimized for frontier model training, delivers 2.8 times the FP4 compute per pod compared to Ironwood, the seventh-generation TPU released in 2025. It doubles bidirectional scale-up bandwidth to 19.2 terabits per second per chip and quadruples scale-out networking to 400 gigabits per second per chip.

Clusters of 8t chips can scale beyond one million TPUs in a single training job through a new interconnect Google calls Virgo networking. A feature called TPU Direct Storage takes the host CPU out of the data path between storage and the accelerators, which Google says significantly speeds up long training runs.

TPU 8i, designed for low-latency agentic inference, delivers 9.8 times the FP8 compute per pod and 6.8 times the HBM capacity of Ironwood. Pod size grows from 256 to 1,152 chips.
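Part of the per-pod gain comes simply from the larger pod. As a rough back-of-the-envelope check, assuming the 9.8-times figure compares a full 1,152-chip TPU 8i pod against a 256-chip Ironwood pod and that compute scales linearly with chip count, the implied per-chip FP8 improvement works out to a little over twofold:

$$
\frac{1{,}152\ \text{chips}}{256\ \text{chips}} = 4.5, \qquad \frac{9.8}{4.5} \approx 2.18\ \text{times per chip}
$$

This is an inference from Google's self-reported pod-level numbers, not a per-chip figure Google has published.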

The chip is designed for production agents that must reason, plan, and execute multi-step tasks in real time, use cases that demand large memory capacity alongside fast sampling.

Both chips are expected to reach general availability from Google Cloud later in 2026. Google's performance claims are self-reported and will face independent evaluation from enterprise cloud customers and third-party analysts in subsequent quarters.

For enterprise buyers currently running training and inference on shared accelerators, the new chips are the first generation of Google silicon to treat those workloads as structurally distinct problems.

Read the original reporting at VentureBeat.