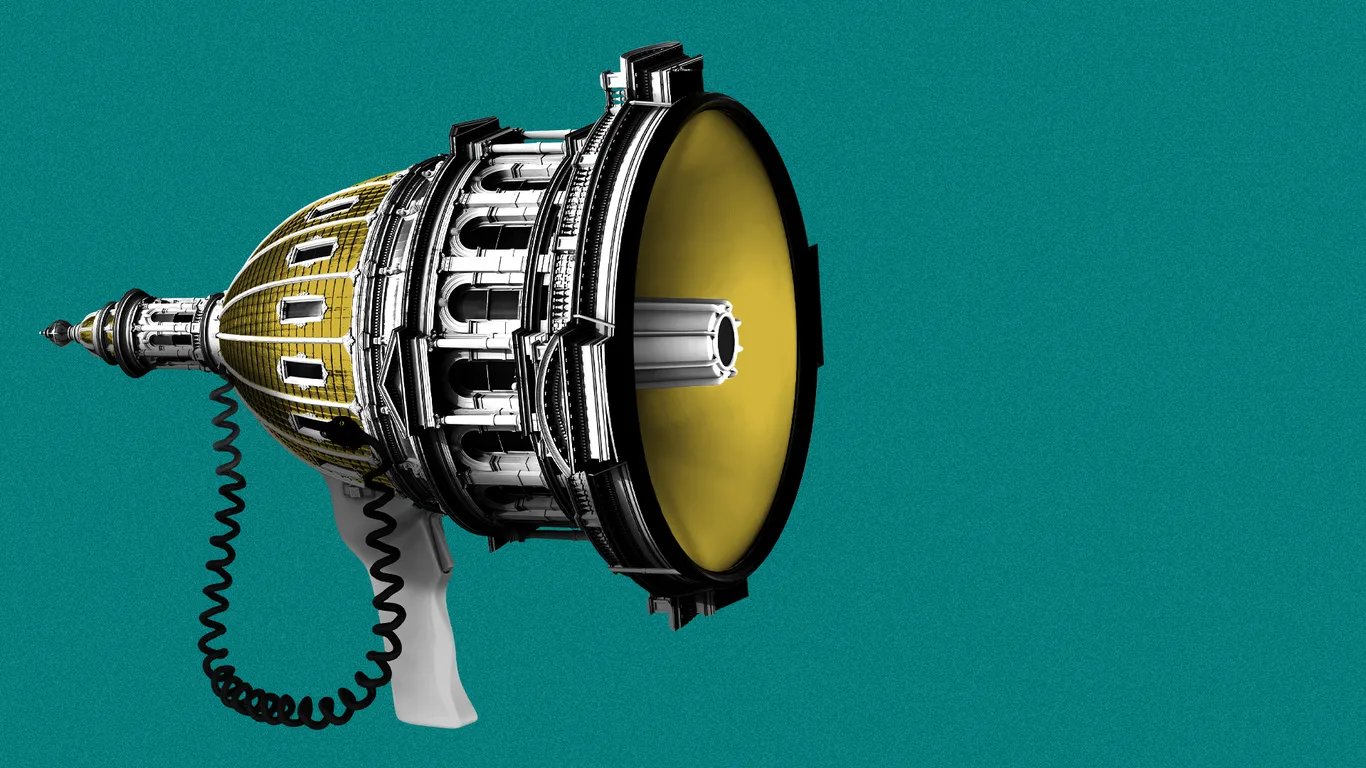

Anthropic's Pentagon Battle Sets a Dangerous Precedent for Every AI Company With Government Clients

Silicon Valley is watching closely as the first-ever supply-chain risk designation against a US domestic company tests whether the executive branch can penalize firms for their AI safety positions.

The designation of Anthropic as a supply-chain risk by the Department of Defense has sent shockwaves through Silicon Valley, raising questions that go well beyond Anthropic's own legal fight. For the first time, a domestic American technology company has been given a classification previously reserved for foreign adversaries suspected of posing cybersecurity threats or national security risks.

The designation requires any company doing business with the Pentagon to certify that it does not use Anthropic's models for military-related work.

For AI companies with government contracts, the implications are difficult to overstate. Anthropic's refusal to allow Claude to be used for fully autonomous weapons without human oversight, or for mass surveillance of American citizens, was treated by the Defense Department not as a business disagreement but as a national security threat.

The supply-chain risk label was applied swiftly, triggered by Defense Secretary Pete Hegseth's directive following a breakdown in contract negotiations over those conditions.

Microsoft, Google, and Amazon Web Services each moved quickly to clarify that they would continue offering Anthropic's Claude to non-defense customers. A Microsoft spokesperson said the company's legal team concluded that the designation applies narrowly to the use of Claude in direct Pentagon contracts, not to broader commercial use by defense contractors across unrelated projects.

Anthropic CEO Dario Amodei echoed that interpretation, arguing the designation had a narrow scope limited to specific military-related deployments.

Still, the downstream consequences are significant. The General Services Administration terminated its OneGov contract with Anthropic, removing Claude from all three branches of the federal government.

Several other agencies, including the Treasury Department and State Department, reportedly discontinued or announced plans to discontinue Anthropic services. A handful of Anthropic's commercial clients have begun exploring backup options amid uncertainty about future enforcement.

Legal experts watching the case say the central constitutional question, whether the government can use procurement power to punish a company for publicly expressing views about its own AI's limitations, has no clear precedent. For years to come, the outcome will likely shape how AI companies communicate safety policies, handle government contract negotiations, and assess the risk of building safety guardrails into publicly stated product terms.

Read the original reporting at WIRED.