Anthropic Sues Pentagon Over 'Supply-Chain Risk' Designation, Calling It Unconstitutional Retaliation

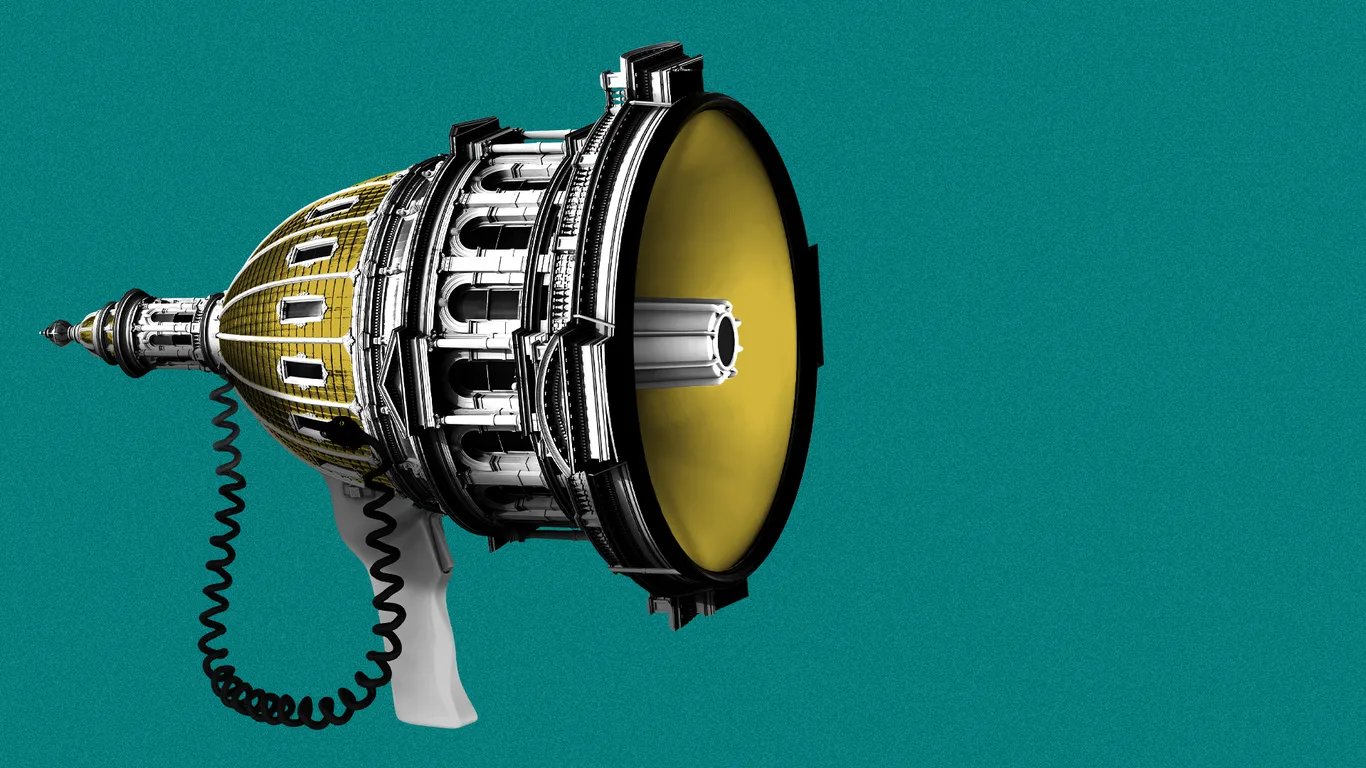

The Claude maker filed two federal complaints challenging the DOD's unprecedented blacklisting of an American AI company, citing First Amendment violations.

Anthropic filed two federal lawsuits against the Department of Defense on Monday, making good on its promise to challenge the Pentagon's decision to label the company a supply-chain risk, a designation typically reserved for foreign adversaries. The complaints were filed simultaneously in a California district court and the D.C. Circuit Court of Appeals, after the Defense Department formally notified Anthropic of the designation late the previous week.

The conflict stems from months of negotiations between the Pentagon and Anthropic over the conditions under which the military could use the company's Claude AI models. Anthropic drew two firm red lines: its technology would not be used for mass surveillance of American citizens, and it was not ready to power fully autonomous weapons without human oversight of targeting and firing decisions.

Defense Secretary Pete Hegseth demanded unrestricted access to Claude for any lawful purpose. After talks collapsed, the administration escalated, with President Trump directing all federal agencies to stop using Anthropic's products within six months.

In its California complaint, Anthropic called the actions 'unprecedented and unlawful,' arguing that the government was using the power of the state to punish a company for protected speech. 'The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech,' the lawsuit reads.

The company also argued that no federal statute authorized the government's actions, contending that the DOD failed to follow required procedures before imposing a supply-chain risk label, including notifying Anthropic and giving it an opportunity to respond.

The blacklisting carries significant financial consequences. The General Services Administration terminated Anthropic's OneGov contract following the designation, cutting off services to all three branches of the federal government.

The company faces the potential loss of hundreds of millions of dollars in annual government revenue, and several Pentagon contractors have begun exploring alternative AI vendors. Major cloud providers Microsoft, Google, and AWS each confirmed they would continue offering Claude to non-defense customers, noting that their legal teams had concluded the designation applies narrowly to direct Pentagon contracts.

Anthropic said it remained committed to pursuing every path toward resolution, including continued dialogue with the government. The case, which could take months to resolve, is being watched closely across Silicon Valley as a test of whether an AI company can be penalized by the executive branch for its publicly stated safety positions.

Read the original reporting at TechCrunch.