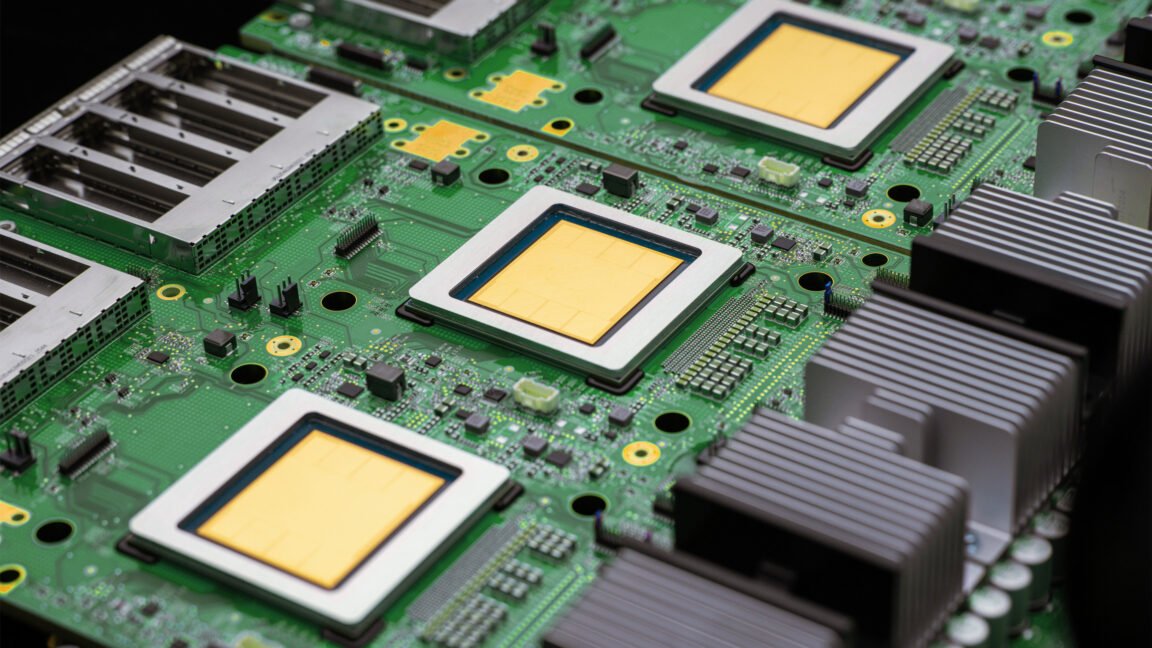

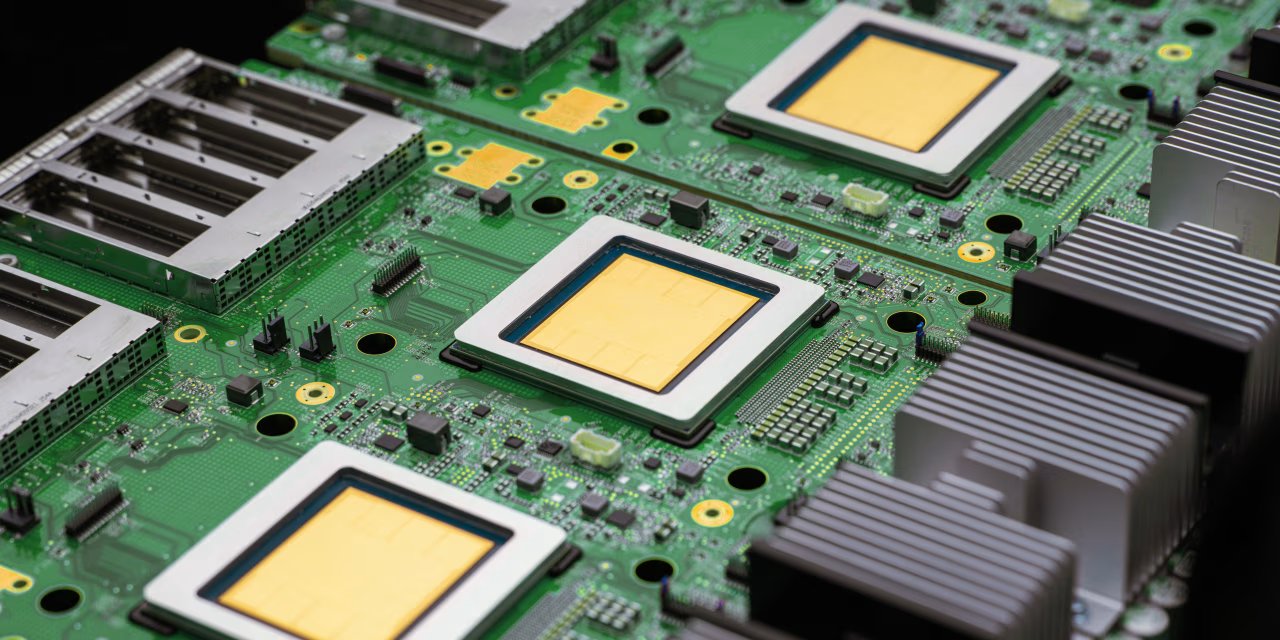

WSJ: Google introduces a new inference-focused TPU chip as AI chip race shifts beyond training

The Wall Street Journal reports that Alphabet has unveiled a processor aimed at running AI models (inference), highlighting growing demand as companies deploy generative AI systems.

Google has rolled out a new processor designed for inference, the computing work of querying AI models, as demand rises for systems that can generate code and execute tasks, The Wall Street Journal reports. The move underscores how competition in AI chips is expanding beyond training accelerators toward efficiency and throughput when deploying models at scale.

Read the original reporting at The Wall Street Journal.