White House Releases National AI Policy Framework, Seeks to Preempt State Laws

The Trump administration's six-part legislative blueprint calls on Congress to block state-level AI regulations, limit AI developer liability, and establish uniform federal standards to prevent a regulatory patchwork.

The Trump administration on Friday released a National Policy Framework for Artificial Intelligence, presenting Congress with a legislative blueprint intended to establish a single federal standard governing the development and deployment of AI across the United States. The framework, which is nonbinding on its own, calls on lawmakers to preempt state-level AI laws that the administration argues impose undue burdens on innovation and create a fragmented regulatory landscape that weakens the country's competitive position relative to China.

The six-part outline covers a wide range of areas: child safety online, intellectual property and copyright, protection of free speech from government-driven censorship, data center permitting and energy requirements, AI workforce development, and — most contentiously — federal preemption of state AI regulation. The White House argued that AI development is inherently interstate in nature and tied to national security and foreign policy, making it inappropriate for individual states to impose their own requirements on model developers.

The framework also calls for limiting the liability of AI developers for harms caused by third parties who misuse their products.

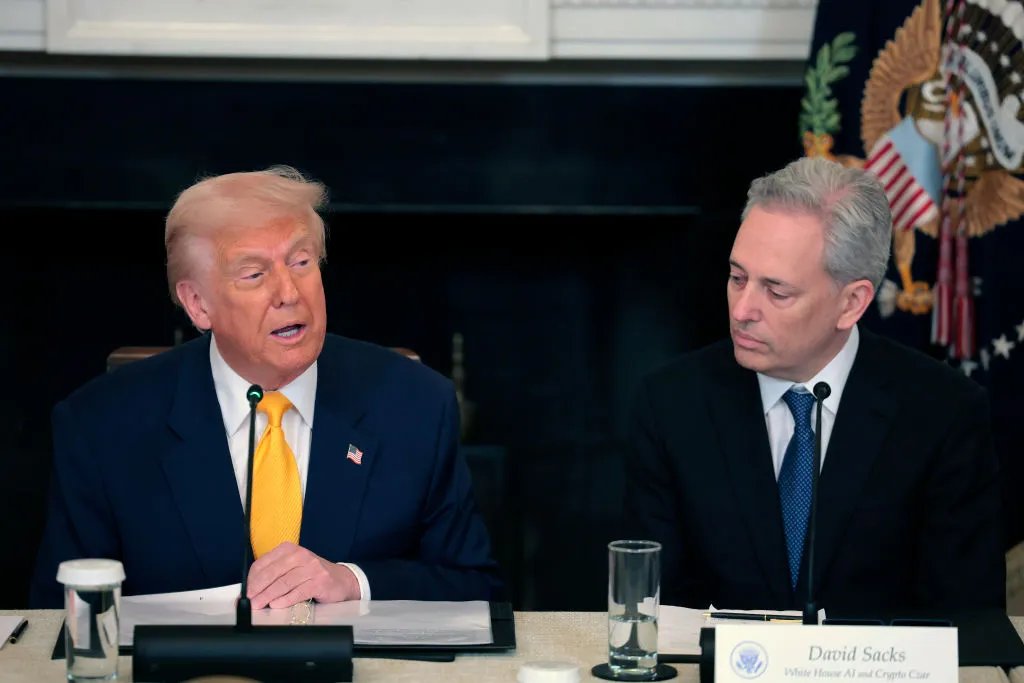

White House Office of Science and Technology Policy director Michael Kratsios said the administration intends to work with Congress in the coming months to transform the framework into legislation that President Trump can sign. Republican House leaders issued a joint statement welcoming the blueprint.

The framework does not endorse or link to any specific piece of pending legislation, leaving congressional staff to translate its principles into statutory text.

Critics were quick to push back. Brendan Steinhauser, CEO of The Alliance for Secure AI, said the framework gives the AI industry broad freedoms with no accountability mechanism for the harms caused by its products.

The same day, Democratic lawmakers introduced the GUARDRAILS Act, which would block the administration's effort to impose a moratorium on state AI regulation. Teresa Carlson, president of General Catalyst Institute, offered the opposing view: the framework provides the consistent national standard that startups and scale-up companies have been asking for to build across state lines without navigating conflicting requirements.

On copyright, the framework signals the administration's belief that training AI models on copyrighted content does not in itself infringe copyright law — a position that aligns with arguments made by leading AI companies facing active litigation over their training data.

Read the original reporting at TechCrunch.