Nscale's 'Stargate Norway' Project Targets 100,000 Nvidia GPUs by Year End as European AI Compute Race Accelerates

The British AI infrastructure company's $2 billion raise adds Sheryl Sandberg and Nick Clegg to its board, positioning it for a potential 2026 IPO with OpenAI and Microsoft as anchor customers.

When Nscale announced its $2 billion Series C on Monday, it was not just the size of the raise that attracted attention but the depth of institutional credibility assembled around a company founded just two years ago. Former Meta COO Sheryl Sandberg, former UK deputy prime minister Nick Clegg, and former Yahoo president Susan Decker will join the board, a slate of appointments that reads more like preparation for a public offering than a typical growth-stage governance upgrade.

Nscale CEO Josh Payne told the New York Times the company might seek to go public 'as early as this year,' and Goldman Sachs and JPMorgan are already working on IPO preparations as underwriters. The company's accelerating revenue supports the timeline: Microsoft is among its anchor clients, with a deal signed last October to bring 200,000 Nvidia GPUs to three European data centers and one in the United States, in partnership with Dell.

OpenAI is also an initial customer of the Stargate Norway project.

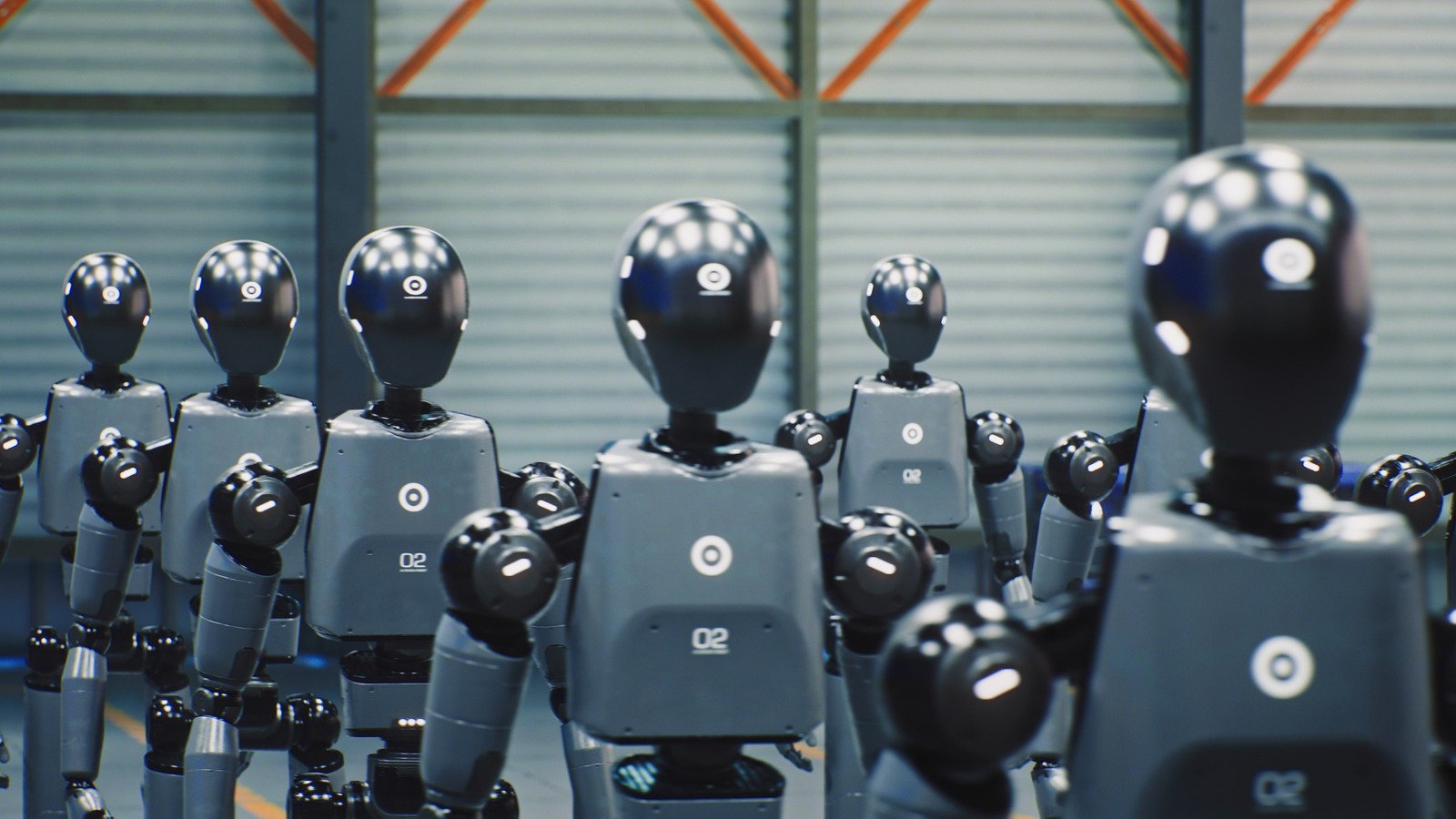

Stargate Norway, a joint venture with Norwegian energy conglomerate Aker that is now fully managed by Nscale, aims to operate 100,000 Nvidia GPUs by the end of 2026, powered by renewable energy from Norway's abundant hydroelectric resources. The project is part of a broader push for European AI sovereignty, as governments and corporations seek to reduce dependence on US hyperscaler infrastructure for sensitive AI workloads.

Nscale differentiates itself through vertical integration: rather than simply reselling cloud compute from existing providers, the company builds and operates its own data centers, procures GPUs directly, and develops its own orchestration software. The approach allows it to offer GPU clusters optimized for AI at lower costs than general-purpose cloud infrastructure, while providing clients with more control over the physical and logical environment in which their models run.

The $1.4 billion delayed-draw term loan the company raised separately, backed by its GPU assets, illustrates the capital intensity of this model. Building AI infrastructure at scale requires sustained investment in physical assets before revenue matures, a dynamic that has made GPUs themselves a form of collateral in the emerging AI finance ecosystem.

Read the original reporting at TechCrunch.